
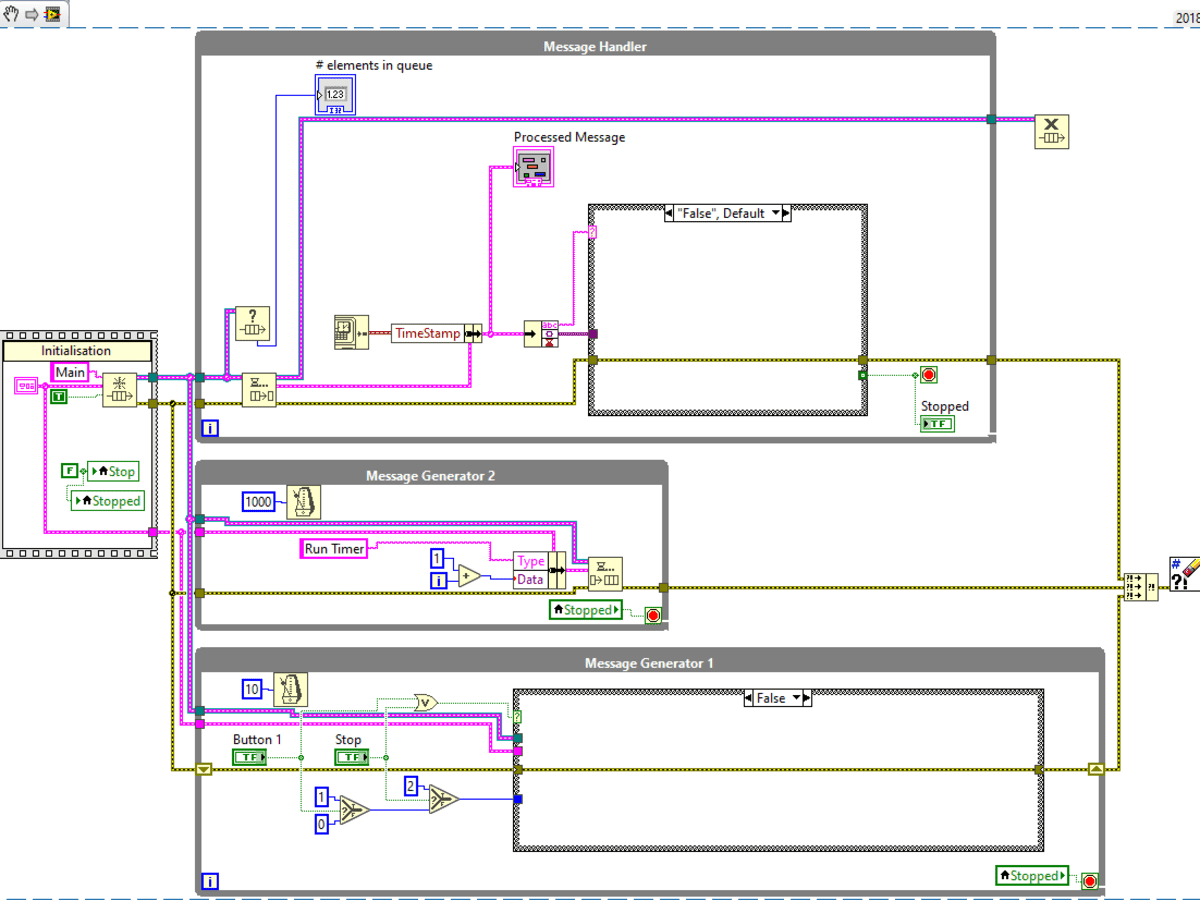

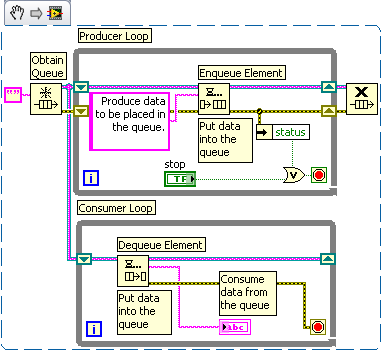

It’s not for nothing that people who program a lot spend a lot of time talking about design patterns: design patterns are basic program structures that have proven their worth over time. And one of the most commonly used design patterns in LabVIEW is the producer/consumer loop. You will often hear it recommended on the user forum, and NI’s training courses spend a lot of time teaching it and using it.

The basic idea behind the pattern is simple and elegant. You have one loop that does nothing but acquire the required data (the producer) and a second loop that processes the data (the consumer). To pass the data from the acquisition task to the processing task, the pattern uses some sort of asynchronous communications technique. Among the many advantages of this approach is that it decouples the required operations and allows both tasks (acquisition and processing) to proceed in parallel.

Now, the implementation of this design pattern that ships with LabVIEW uses a queue to pass the data between the two loops. Did you ever stop to ask yourself why? I did recently, so I decided to look into it. As it turns out, the producer-consumer pattern is very common in all languages - and they always use queues. Now if you are working in an unsophisticated language like C or any of its progeny, that choice makes sense. Remember that in those languages, the only real mechanism you have for passing data is a shared memory buffer, and given that you are going to have to write all the basic functionality yourself, you might as well do something that is going to be easy to implement, like a queue.

However, LabVIEW doesn’t have the same limitations, so does it still make sense to implement this design pattern using queues? I would assert that the answer is a resounding “No” - which is really the point that the title of this post is making: while queues certainly work, they are not the technique that a LabVIEW developer who is looking at the complete problem would pick, unless of course they were trying to blindly emulate another language.

The classic use case for this design pattern includes a consumer loop that does nothing but process data. Unfortunately, in real-world applications this assumption is hardly ever true. You see, when you consider all the other things that a program has to be able to do - like maintain a user interface, or start and stop itself in a systematic manner - much of that functionality ends up being in the consumer loop as well. The only other alternative is to put this additional logic in the producer loop where, for example, asynchronous inputs from the operator could potentially interfere with your time-sensitive acquisition process. So if the GUI is going to be handled by the consumer loop, we have a couple of questions to answer.

Question: What is the most efficient way of managing a user interface? The other option, of course, is to implement some sort of polling scheme, which is, at the very least, extremely inefficient. While it is true that you could create a third separate process just to handle the GUI, you are still left with the problem of how to communicate control inputs to the consumer loop - and perhaps the producer too.

Question: Do queues and control events play well together? The problem is that while queues and events are similar in that they are both ways of communicating that something happened by passing some data associated with that happening, they really operate in different worlds. Queues can’t tell when events occur, and events can’t trigger on changes in queue status. Although there are ways of making them work together, the “solutions” can have troublesome side effects. You could have an event structure check for events when the dequeue operation terminates with a timeout, but then you run the risk of the GUI locking up if data is put into the queue so fast that it never has the chance to time out. Likewise, you could put the dequeue operation into a timeout event case, but now you’re back to polling for data - the very situation you are wanting to avoid.

The Solution

Thankfully, there is a simple solution to the problem: All you have to do is lose the queue… The alternative is to use something that integrates well with control events: user-defined events (UDEs).
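For readers who think more easily in a text-based language, the queue-based form of the producer/consumer pattern discussed in this post can be sketched in Python. This is only an analogy, not LabVIEW code: the names `producer`, `consumer`, `run`, `STOP`, and the separate `gui_q` standing in for operator input are all hypothetical. The consumer looks at operator input only when a dequeue times out, so while data keeps arriving the input is never serviced.

```python
import queue
import threading

STOP = object()  # hypothetical sentinel telling the consumer to shut down


def producer(data_q, samples):
    """Acquisition loop: does nothing but push samples into the queue."""
    for sample in samples:
        data_q.put(sample)
    data_q.put(STOP)


def consumer(data_q, gui_q, timeout=1.0):
    """Processing loop that checks operator input only after a dequeue timeout."""
    processed, handled = [], []
    while True:
        try:
            item = data_q.get(timeout=timeout)
        except queue.Empty:
            # The only place operator input is ever serviced.
            while not gui_q.empty():
                handled.append(gui_q.get_nowait())
            continue
        if item is STOP:
            break
        processed.append(item * 2)  # stand-in for real processing
    return processed, handled


def run(samples):
    """Run one producer thread against a consumer in the calling thread."""
    data_q, gui_q = queue.Queue(), queue.Queue()
    gui_q.put("stop button")  # operator input that arrives before any data
    worker = threading.Thread(target=producer, args=(data_q, samples))
    worker.start()
    processed, handled = consumer(data_q, gui_q)
    worker.join()
    # Third value: operator inputs still waiting, never seen by the consumer.
    return processed, handled, gui_q.qsize()
```

Calling `run(range(1000))` processes every sample, but as long as the producer keeps the queue supplied the dequeue never times out, and the operator's input is still sitting unhandled when the loop exits.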